Your PMS Integrations Are Working. Your Data Strategy Isn't.

PMS platforms are becoming more open but most multifamily operators still lack a unified data layer across their systems


Today's Thesis Driven newsletter was guest written by Remen Okoruwa, Co-Founder and Chief Executive Officer of Propexo, a data infrastructure company focused on multifamily real estate. Propexo builds automated pipelines from multifamily operational tools into operator-controlled data warehouses.

A recent Thesis Driven survey asked operators and vendors to rate the major property management systems on openness. The results should give the multifamily industry pause: no platform scored above 6.5 on a 10-point scale, and the lowest averaged just over 3. Those scores came despite real moves by the major platforms in recent years. Yardi shipped an MCP connector for Claude. AppFolio launched a marketplace of integrated tools. Entrata overhauled its API program and gave developers a sandbox environment for the first time. The industry press covered each as a sign of progress. But despite all of it, operators still can't get a unified view of what's happening across their portfolios.

The conclusion from Thesis Driven editor-in-chief Brad Hargreaves was direct: "None of it, however, appears to have meaningfully moved the needle on how the industry experiences these platforms day to day."

The data is moving; it just isn't connecting. And for operators trying to understand what's happening across their portfolio, that distinction is the whole problem. Even if every PMS became perfectly open tomorrow, most operators still wouldn't have a data strategy; they'd have better plumbing.

Getting data out of a system and knowing what to do with it are two different problems. Most of the industry has been focused on the former while the latter has gone largely unaddressed. Multifamily operators have a data ownership problem, and with AI now forcing the issue, that gap is getting expensive. The operators pulling ahead have figured out that the answer is building a data layer they own and control, not waiting for their PMS vendor to solve it for them.

In this letter we cover:

Four methods move data in multifamily today, and each carries a limitation that vendors rarely advertise up front. APIs are built for developers and rarely serve the needs of BI teams trying to make sense of portfolio data. Scheduled reports are read-only exports shaped by whoever designed the vendor's dashboard. SFTP transfers arrive on the vendor's timeline, not yours. Read-only database access is the most powerful option but is rarely offered, typically showing up as a six-figure add-on buried in contract negotiations.

Even on the highest-scoring platforms in the survey, the structural problem persists: each tool's data lives in its own world, with its own field definitions and its own logic for what "vacant," "occupied," or "made ready" actually means. The PMS knows about leasing, the maintenance platform knows about work orders, and the CRM knows about leads, but none of them know about the resident as a whole person whose history spans all three.

The PMS vendors have a reasonable counterargument. Both Yardi and Entrata have renewal and work order modules, and they'll point to that coverage when you raise the question. But if you're running HappyCo for inspections, EliseAI for resident communications, Snappt for screening, and Funnel for leasing—and plenty of operators are running exactly this stack—none of that data lives in the PMS. Chat records and sentiment signals from EliseAI have no natural home there, so they rarely get logged, and neither Yardi nor Entrata can see or query them. The full picture of what's happening at a property, let alone across a portfolio, is split across systems that have never shared a schema.

That fragmentation has a direct cost. The questions that actually drive portfolio decisions require data from multiple systems, consistently structured and accessible in one place. No single tool can answer any of them, and the reason goes deeper than reporting. Data ownership means your organization controls where operational data is stored, how it's structured, who can access it, and whether it survives a vendor switch. In most multifamily portfolios today, vendors control all of that.

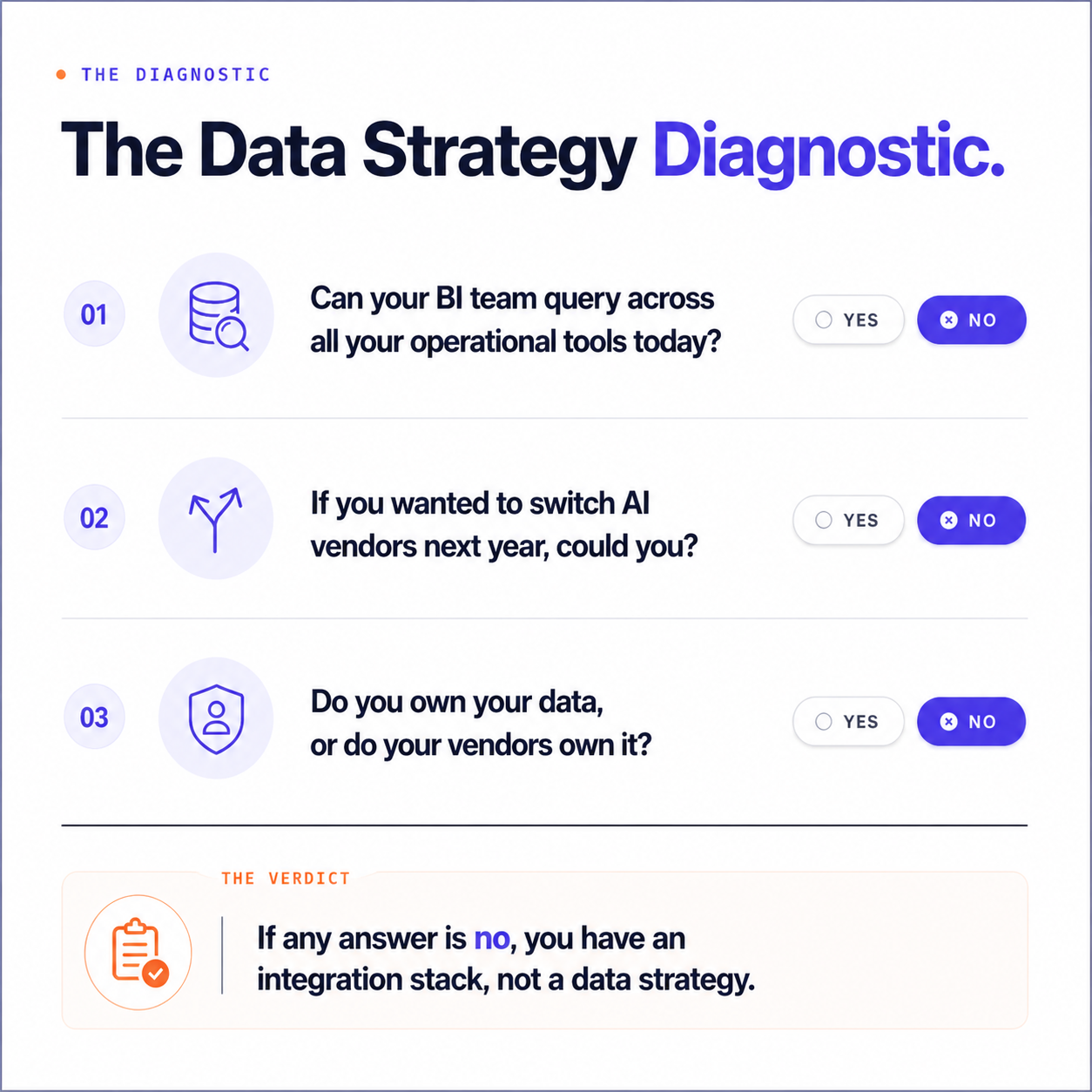

Before moving on, consider where your organization stands:

If any answer to these is no, the problem isn't your integrations. It's your architecture.

AI adoption in multifamily is accelerating, but the data on how those investments are actually performing tells a more complicated story. According to MLQ's State of AI in Business 2025 report, 95% of AI pilots fail, with the inability to integrate AI with key tools and datasets cited as a major contributor. Real estate is no exception. JLL's 2025 Global Real Estate Technology Survey found that while 90% of CRE companies are now piloting AI, only 5% have achieved all of their program goals. The bottleneck, across both studies, is the same one this piece has been describing: whether firms have the data infrastructure to actually make AI work.

The failure rate is predictable when you understand what these tools are actually working with. An AI tool pointed at fragmented, unstructured operational data across six vendor silos doesn't produce insight; it produces confident-sounding answers built on incomplete information, which in some ways is worse than no answer at all.

The survey also noted that AI has made platform openness a "far higher priority" for operators, and that's accurate as far as it goes. But openness is a prerequisite, not a solution. An open API gets data out of a system. A data strategy governs where it goes, what format it arrives in, and who controls the warehouse it lands in. Operators who have built a unified data layer before deploying AI can evaluate and adopt new tools as models improve without rebuilding their infrastructure from scratch. Those who haven't are making a different kind of bet: that their current vendor's roadmap will be good enough, for long enough, that it won't matter.

The foundation is a data warehouse you control. Snowflake, Databricks, BigQuery, Microsoft Fabric: the platform matters less than the principle. Your data needs a home that belongs to you, not to a vendor relationship that could change at the next contract renewal.

From there, operational tools feed into it on a consistent schedule and in a normalized structure: PMS data, CRM data, maintenance records, accounting exports, leasing activity. Consistent schedule means automated pipelines running daily or more frequently, not a quarterly CSV pull that the BI team has to clean by hand. What that means in practice: a lease in Yardi and a lease in Entrata resolve to the same data structure in the warehouse, with the same field names and the same relationship to a unit record. Without that normalization layer, the data hasn't been centralized. It's just been moved to a different mess.
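To make the normalization step concrete, here is a minimal sketch of two source rows resolving to one warehouse structure. The raw field names (`UnitCode`, `leaseStartDate`, and so on) and the `Lease` shape are hypothetical, chosen for illustration; real Yardi and Entrata exports will look different.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass(frozen=True)
class Lease:
    """Warehouse-side lease record shared by every source system."""
    source: str
    unit_id: str
    resident_name: str
    start_date: date
    end_date: date
    monthly_rent: float


# Hypothetical raw field names per source; real vendor exports will differ.
FIELD_MAPS = {
    "yardi": {
        "unit_id": "UnitCode",
        "resident_name": "TenantName",
        "start_date": "LeaseFrom",
        "end_date": "LeaseTo",
        "monthly_rent": "Rent",
    },
    "entrata": {
        "unit_id": "unitNumber",
        "resident_name": "residentName",
        "start_date": "leaseStartDate",
        "end_date": "leaseEndDate",
        "monthly_rent": "rentAmount",
    },
}


def normalize_lease(source: str, raw: dict) -> Lease:
    """Map one raw vendor row onto the shared Lease structure."""
    names = FIELD_MAPS[source]
    return Lease(
        source=source,
        unit_id=str(raw[names["unit_id"]]).strip().upper(),
        resident_name=str(raw[names["resident_name"]]).strip(),
        start_date=date.fromisoformat(raw[names["start_date"]]),
        end_date=date.fromisoformat(raw[names["end_date"]]),
        monthly_rent=float(raw[names["monthly_rent"]]),
    )


yardi_row = {
    "UnitCode": "a-101", "TenantName": "Jordan Lee",
    "LeaseFrom": "2025-01-01", "LeaseTo": "2025-12-31", "Rent": "1850.00",
}
entrata_row = {
    "unitNumber": "B-204", "residentName": " Priya Shah ",
    "leaseStartDate": "2025-02-01", "leaseEndDate": "2026-01-31", "rentAmount": 2100,
}

leases = [normalize_lease("yardi", yardi_row), normalize_lease("entrata", entrata_row)]
lease_columns = [f.name for f in fields(Lease)]
```

Once both rows are `Lease` records, every downstream query sees the same column names and the same `unit_id` format, whichever system the lease came from.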

The more advanced version is a dedicated, authoritative data layer that serves as the firm-wide source of truth, where operational tools integrate with it, AI tools query it, and the BI team works from it directly.

This is already happening. JPMorgan Chase, one of the most aggressive AI adopters in any industry, has over 450 AI proofs of concept in development and onboarded 200,000 employees onto its internal AI platform in under a year. None of it works without the proprietary data layer underneath. Katie Hainsey, the bank's Head of AI/ML, has been direct: making the firm's data "AI ready" through investment in data products and infrastructure is non-negotiable. The AI tools get the headlines; the data layer is what makes them work.

Rukevbe "Rukus" Esi, SVP and Chief Digital Officer at AvalonBay Communities, has organized the company's entire technology stack around the same principle. "Flexibility and control genuinely matter to us," he says. "We don't want to be beholden to any single vendor's roadmap, and we need to be able to pivot quickly without sacrificing continuity for the business." The data layer, he adds, is "the one thing that has to remain consistent regardless of what platforms or solutions sit around it."

As AI tools mature, that logic only gets stronger. "When your data is clean, owned, and well-structured, operators can generate insights directly or expose data to other services on their own terms rather than waiting on a vendor to build that capability for them," Esi says. AvalonBay's approach is not unique to their scale—any operator with portfolio-level ambitions can adopt it. Most just haven't gotten there yet.

The case for a unified data layer is easier to make in the abstract than to fund internally. Two operator examples illustrate both the payoff and the friction.

Daniel Paulino, formerly VP of Marketing at Bozzuto, ran into a problem most multifamily marketing teams recognize but rarely solve: a single prospect appearing as multiple separate leads depending on which platform captured the interaction. A renter who clicked an ad on Tuesday, toured on Thursday, and applied the following week appeared as three separate leads in three separate tools. Bozzuto had almost no visibility into which channels were actually driving signed leases, because the prospect journey fragmented the moment someone crossed from one platform to another.

Paulino secured funding and purchased data layer software to resolve the identity problem. A second obstacle became apparent quickly: no major data platform had a native connector to any multifamily PMS, not to RealPage, not to Yardi, not to Entrata. Multifamily was too niche for platforms built around Salesforce and Google Analytics. Bozzuto hired a marketing analytics consultancy to build the data feeds manually. The project recovered visibility into lead attribution, but the path exposed a familiar gap: no major data vendor has built for multifamily, and the purpose-built connectors that close that gap are only now beginning to emerge.
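The identity problem itself is simple to state once the data sits in one place. The sketch below is illustrative only, not Bozzuto's implementation: it assumes each tool exports lead events with an `email` field and an ISO-formatted `date`, and it matches on normalized email alone. Production identity resolution typically also weighs phone numbers, names, and device signals.

```python
from collections import defaultdict


def normalize_email(email: str) -> str:
    """Trim whitespace and lowercase so the same address always matches."""
    return email.strip().lower()


def resolve_prospects(events: list[dict]) -> dict[str, list[dict]]:
    """Group lead events from different tools into one journey per prospect."""
    journeys: dict[str, list[dict]] = defaultdict(list)
    for event in events:
        journeys[normalize_email(event["email"])].append(event)
    for touches in journeys.values():
        # ISO dates (YYYY-MM-DD) sort chronologically as plain strings.
        touches.sort(key=lambda e: e["date"])
    return dict(journeys)


# Hypothetical events: one renter seen by three separate tools.
events = [
    {"tool": "pms", "email": "SAM.RIVERA@EXAMPLE.COM", "date": "2025-03-12", "action": "application"},
    {"tool": "ad_platform", "email": "Sam.Rivera@example.com", "date": "2025-03-04", "action": "ad_click"},
    {"tool": "crm", "email": "sam.rivera@example.com ", "date": "2025-03-06", "action": "tour"},
]

journeys = resolve_prospects(events)
actions = [e["action"] for e in journeys["sam.rivera@example.com"]]
```

Three records in three tools collapse into one prospect with an ordered journey, which is the precondition for attributing a signed lease to the channel that started it.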

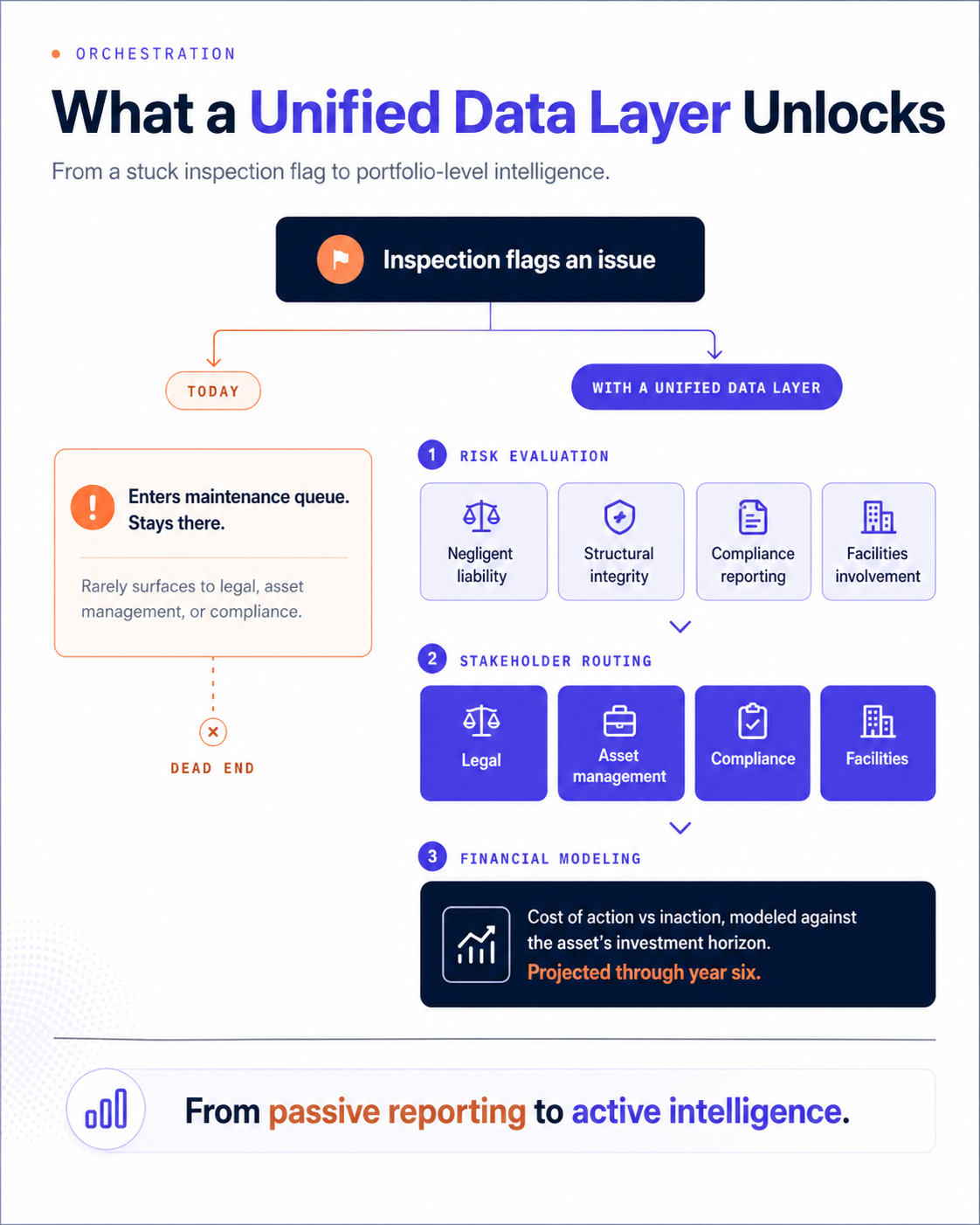

A second example comes from an NMHC Top 10 manager working with Propexo to prototype an inspection-triggered orchestration system. Today, when an inspection flags an issue, it enters a maintenance queue and stays there, rarely surfacing to legal, asset management, or compliance, even when the finding carries significant financial or regulatory implications. The vision is to replace that siloed handoff with a system that evaluates each flag against multiple risk dimensions—negligent liability, structural integrity, compliance reporting, facilities involvement—and routes findings to the right stakeholders.
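As a rough illustration of the routing idea, and not the prototype itself, the sketch below checks one inspection finding against the four risk dimensions named above and returns the teams to notify. The mapping of dimensions to stakeholders and the shape of the `finding` record are assumptions made for the example.

```python
# Hypothetical mapping from risk dimension to the team that should see it.
ROUTING = {
    "negligent_liability": "legal",
    "structural_integrity": "asset_management",
    "compliance_reporting": "compliance",
    "facilities_involvement": "facilities",
}


def route_finding(finding: dict) -> list[str]:
    """Return the stakeholders to notify for one inspection finding."""
    # Every flag still lands in the maintenance queue, as it does today.
    recipients = ["maintenance"]
    for dimension, stakeholder in ROUTING.items():
        if finding["risks"].get(dimension, False):
            recipients.append(stakeholder)
    return recipients


# Hypothetical finding: a loose balcony railing with liability and reporting exposure.
finding = {
    "unit_id": "A-101",
    "issue": "loose balcony railing",
    "risks": {"negligent_liability": True, "compliance_reporting": True},
}

recipients = route_finding(finding)
```

In practice the risk flags would come from a scoring step that draws on warehouse data rather than being set by hand, but the routing logic stays this simple once those flags exist.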

The system would also model the financial impact of action versus inaction against the asset's investment horizon, projecting what an unaddressed issue costs by year six and whether it can be safely deprioritized in the near term.
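One simple way to frame that comparison, offered as a sketch under stated assumptions rather than the model being prototyped: assume the repair cost grows at a fixed annual rate while the issue goes unaddressed, then discount the future cost back to today. The growth and discount rates below are placeholders, and the model ignores liability exposure, lost rent, and other costs a real analysis would include.

```python
def deferred_cost(cost_today: float, annual_growth: float, years: int) -> float:
    """Projected repair cost if the issue is left alone for `years` years."""
    return cost_today * (1 + annual_growth) ** years


def present_value(amount: float, discount_rate: float, years: int) -> float:
    """Value in today's dollars of an amount paid `years` years from now."""
    return amount / (1 + discount_rate) ** years


def can_defer(cost_today: float, annual_growth: float,
              discount_rate: float, years: int) -> bool:
    """True if paying the grown cost later is no more expensive in today's dollars."""
    later = present_value(
        deferred_cost(cost_today, annual_growth, years), discount_rate, years
    )
    return later <= cost_today


# Hypothetical inputs: a $10,000 repair, evaluated at year six.
fast_growing = can_defer(10_000, annual_growth=0.25, discount_rate=0.08, years=6)
slow_growing = can_defer(10_000, annual_growth=0.03, discount_rate=0.08, years=6)
```

Under these assumptions the decision reduces to whether the issue worsens faster than the discount rate; the value of the warehouse is in supplying a defensible growth estimate from inspection and maintenance history.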

None of it is possible when inspection data, financial projections, asset plans, and compliance records live in separate systems. The data warehouse is what makes that kind of cross-functional intelligence feasible, and without it, most flags never reach the people who need to act on them.

The deeper payoff is structural. A shared data layer changes how information moves through an organization. Instead of teams scanning weekly reports that rarely change, critical updates surface when they are relevant to the people who need to act on them. The inspection flag that carries liability risk, the maintenance pattern that predicts a move-out: these reach the right people at the right time. That's the difference between passive reporting and active intelligence.

Outside of real estate, the problem of fragmented operational data was solved years ago. In technology, financial services, healthcare, and retail, automated connectors pull data from operational tools into centralized warehouses where it can be queried, analyzed, and fed into AI models. This is standard enterprise infrastructure, and it has been in production for over a decade. Fivetran built an entire business around it, offering hundreds of pre-built connectors so organizations can centralize data without building custom pipelines.

Nobody built it for multifamily. The connectors that matter here, Yardi, RealPage, Entrata, HappyCo, EliseAI, Funnel, don't exist in those general-purpose catalogs because multifamily was never a visible enough market. The methodology is proven. The gap has always been industry-specific coverage: operational tools specialized enough that no general-purpose data infrastructure company ever prioritized them. Companies like Propexo are building to close that gap, with automated pipelines from multifamily operational tools into operator-controlled warehouses and a normalization layer built in.

The evidence is consistent. Ninety-five percent of AI pilots are failing; only 5% of CRE companies have achieved all their AI goals. The operators pulling ahead, like AvalonBay, built the data layer first. Even sophisticated teams like Bozzuto's marketing group had to hire consultants to manually build data feeds that should have been standard infrastructure, simply because the connectors didn't exist. The most ambitious use cases on the horizon, inspection-to-remediation orchestration and cross-portfolio risk modeling, require a warehouse underneath them.

The AI pilot that stalls because it couldn't access unified data, the marketing dollar that leaks because a prospect can't be tracked across systems, the inspection flag that never reaches the right stakeholder: these are not integration failures. They are data ownership failures, and the cost compounds with every quarter that passes.

The operators pulling ahead aren't waiting for their PMS vendors to solve this. They are standing up their own data warehouses, building or buying the connectors to populate them, and treating the data layer as strategic infrastructure they own and control.

The playbook exists and the tooling has caught up. The only remaining variable is whether operators act now or wait until the next failed pilot forces the conversation.
