Deep Dive: Acres and AI Land Agents

Exploring the first agentic land search and reporting tools

Land data has never been as accessible as it is today. Basic parcel information previously scattered across various county and municipal websites is now readily available; developers can pull a given site’s zoning, ownership history, physical characteristics, and much more from a dozen different platforms. That is a tremendous trove of data for those who know how to use it.

Fortunately, AI is making it far easier for developers to do things with those large data sets. Over the past few years, AI models have gotten very good at processing and querying the kinds of data now available to acquisitions teams. While “publicly accessible data on a map” as a software product is quickly being commoditized, doing interesting things with that data is still in its early days—and the race is on to build agentic tools that don’t just present data, but structure and analyze it in novel ways.

Today's letter will explore the future of agentic AI in land search through a deep dive into Acres, the Arkansas-based land data and AI platform. Acres has been pushing the boundaries of agentic land search, including the release of a natural language site search tool beyond anything I’ve seen in the market to date.

We’ll discuss the evolution of the parcel data category, explore Acres’ latest release, and dive into how agentic AI will transform the real estate acquisitions game over the next 18 to 36 months.

The Parcel Data Arms Race

The past five years have seen the emergence of readily accessible public parcel data as a standard input for real estate development. Zoneomics, Regrid, and a growing roster of companies have digitized and structured parcel boundaries, zoning codes, ownership records, and tax assessments, making them widely accessible through APIs and mapping interfaces. Developers and land brokers who once relied on county assessor websites and personal relationships to piece together site information now have most of it available at scale.

While that access has been helpful, it has also been commoditized. The underlying data is public, and the number of companies offering some version of "structured parcel data on a map" has grown to the point where simply possessing publicly accessible data is no longer a meaningful differentiator.

Instead, alpha is created by those who either (a) bring proprietary data to the table or (b) have a unique strategy for using the data at hand.

The widespread availability of data has, in turn, unlocked new approaches to real estate acquisitions. Developers are no longer limited to evaluating the same marketed sites on LoopNet or waiting for a broker to bring them an off-market deal. They're screening hundreds of off-market parcels against structured datasets, filtering by zoning, acreage, proximity to infrastructure, and entitlement history, and surfacing sites that would have taken weeks to find manually. The game has shifted from browsing listings to querying large datasets.

But the user interface hasn't kept up with the potential of this approach. Most land search tools still present the same toggles and switches you'd find on a 2005-era Zillow interface: set your acreage range, pick a zoning category, draw a radius, scroll through results. Truly agentic workflows, where an AI agent interprets a complex, multi-variable query and does the work of screening, ranking, and analyzing sites, have remained on the horizon. And that is what Acres is trying to change.

Farm to (Data) Tables

Acres is hardly your typical proptech company. Based in Fayetteville, Arkansas, it was started by Carter Malloy, the scion of an Arkansas farming family who originally built the data tool as an internal platform for his land investment business, AcreTrader. I wrote about farmland investing in an early Thesis Driven letter, featuring AcreTrader as one of several tools democratizing access to farmland investments. (As a fellow Arkansas kid, I may have some hometown bias for Malloy’s business. As they say, sooey.)

AcreTrader launched about eight years ago, eventually deploying hundreds of millions of investor dollars into farmland. As Malloy explained, "As we were analyzing sites, we kept running into the same problem every day: lack of available information, clarity, no single source of land data. We had eight different point solutions, none of which were very good."

So the team built an internal data platform to solve the problem for themselves.

Over time, Malloy and the Acres team realized that the data infrastructure they had built to support their own capital deployment was more valuable than the asset management platform itself, and more broadly applicable than they'd initially imagined. Like Slack, which famously started as an internal communications tool for a game studio, the internal tool became the product. The team doubled down on it: the company sold its land investment business in mid-2025 to focus 100 percent on data and AI.

When I played around with Acres a few years ago, it was obviously shaped by its rural land sales origins. The platform had compelling data on soils, land history, and timber composition, the kind of information that makes a land wonk's heart sing but probably wasn't the most relevant dataset for a suburban homebuilder evaluating infill sites. But that experience showed the team the power of proprietary data, and over time they began layering in custom datasets not available elsewhere: sewers, detailed satellite photos, and more.

But perhaps more importantly, the Acres team made an early bet on agentic AI—culminating in something I've anticipated for the past 18 months but hadn't seen in the wild until now: natural language land search.

Beyond Dials and Knobs

Existing land search tools have made data more accessible and layered in more datasets over time. But the UI remains fundamentally human-powered: the same filter set found in your typical internet listing service (ILS) search portal. “If I can ask Claude Code to build a website in plain English,” asked Malloy, “why can’t I ask a land search site to find a very specific set of parcels?”

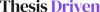

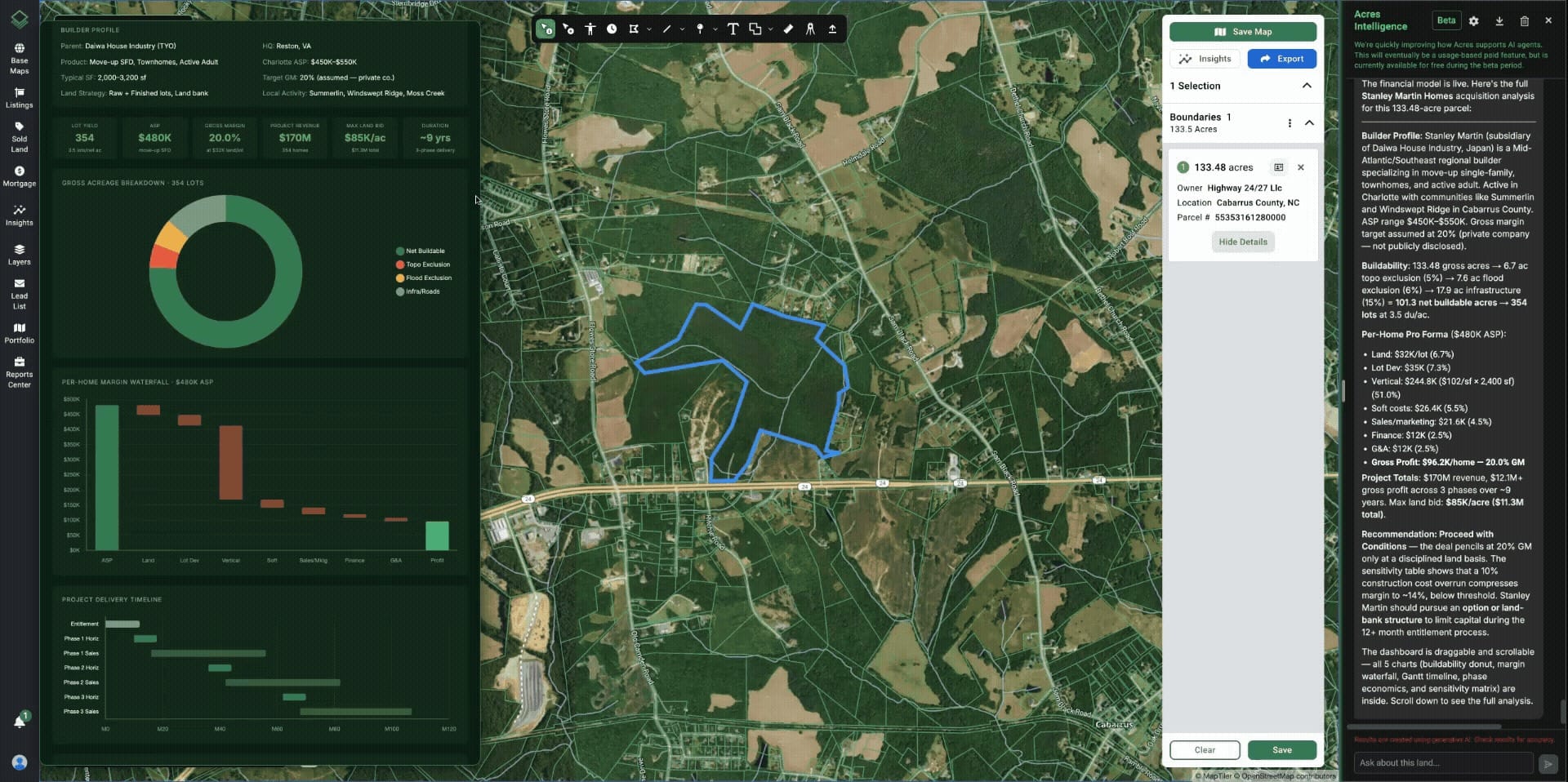

For example, consider the following prompt: “Show infill parcels for Toll Brothers product to potentially develop within 5 minutes of an HEB near Round Rock, Texas.” With this prompt, the Acres AI agent automatically identifies parcels with acceptable flood, topographic, zoning, and infrastructure (sewer and water) conditions, then maps and force-ranks those within 5 minutes of an HEB, as illustrated below.

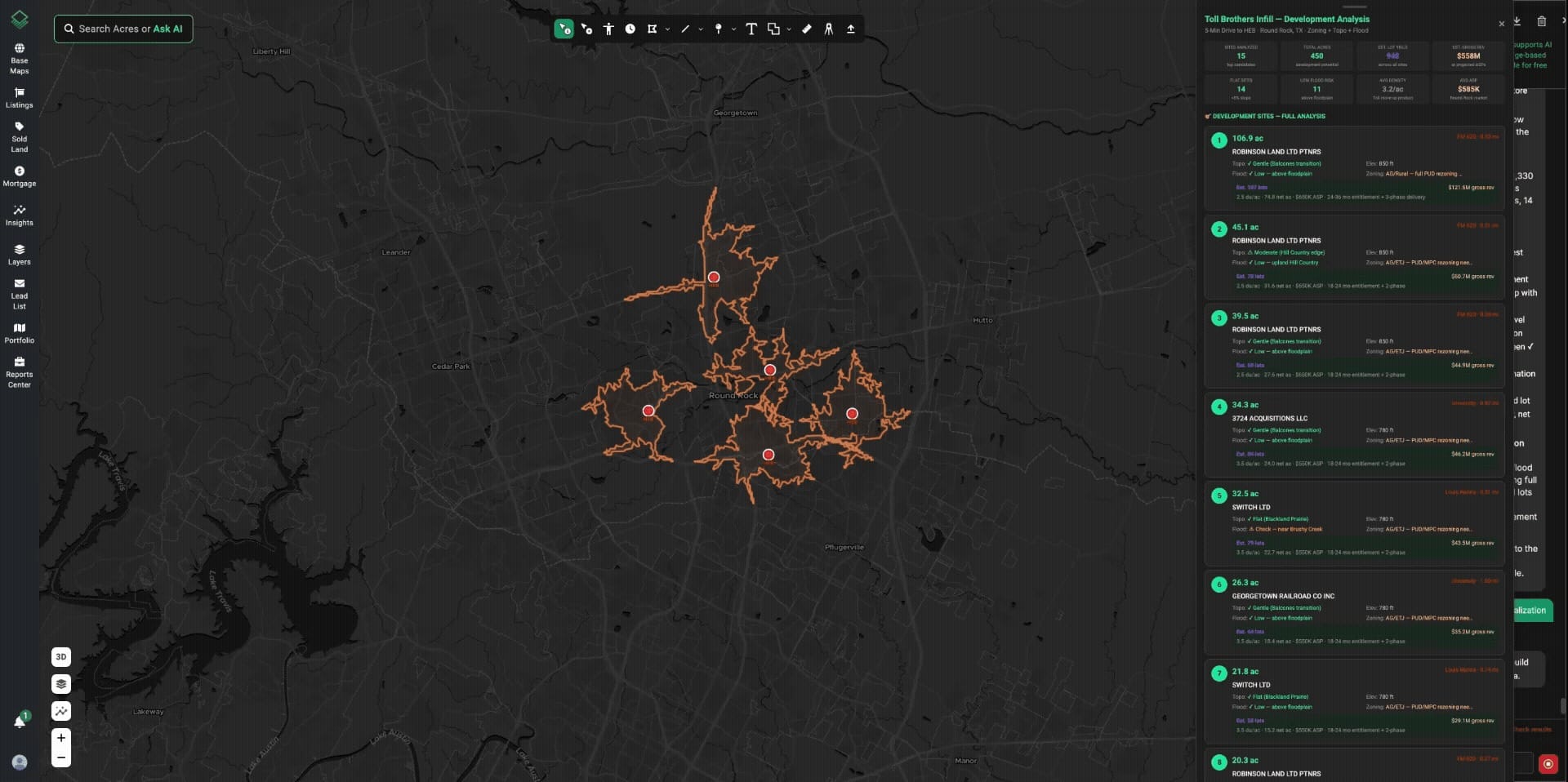
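
The screen-then-rank pattern behind a query like this can be sketched in a few lines of Python. This is a minimal illustration, not Acres' implementation: the `Parcel` fields, the thresholds, the sample data, and the `screen_and_rank` helper are all hypothetical, and the sketch assumes the natural language prompt has already been translated into structured criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Parcel:
    # Hypothetical fields; a real parcel record would carry many more attributes.
    parcel_id: str
    acres: float
    in_floodplain: bool
    has_sewer: bool
    has_water: bool
    zoning_allows_residential: bool
    drive_minutes_to_anchor: float  # drive time to the nearest grocery anchor


def screen_and_rank(parcels, max_drive_minutes=5.0, min_acres=1.0):
    """Drop parcels that fail any hard constraint, then rank the survivors."""
    survivors = [
        p for p in parcels
        if not p.in_floodplain
        and p.has_sewer
        and p.has_water
        and p.zoning_allows_residential
        and p.acres >= min_acres
        and p.drive_minutes_to_anchor <= max_drive_minutes
    ]
    # Closest to the anchor first; larger sites break ties.
    return sorted(survivors, key=lambda p: (p.drive_minutes_to_anchor, -p.acres))


# Made-up sample data for illustration only.
SAMPLE = [
    Parcel("A", 4.0, False, True, True, True, 3.5),
    Parcel("B", 6.0, True, True, True, True, 2.0),    # fails: floodplain
    Parcel("C", 2.5, False, True, True, True, 3.5),
    Parcel("D", 10.0, False, True, True, True, 7.0),  # fails: too far
    Parcel("E", 3.0, False, False, True, True, 1.0),  # fails: no sewer
    Parcel("F", 1.5, False, True, True, True, 2.0),
]

for rank, parcel in enumerate(screen_and_rank(SAMPLE), start=1):
    print(rank, parcel.parcel_id, parcel.drive_minutes_to_anchor)
```

The hard part in practice is upstream of this code: turning a phrase like "Toll Brothers product" into concrete acreage, zoning, and infrastructure thresholds is the work the agent does before any filter runs.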

In another example, a Pittsburgh infill developer used Acres to take 60,000 publicly owned parcels and narrow them down to the single best cluster for a partnership with the city. The model weighs proximity to recently upgraded sewer and water lines, location within desirable neighborhoods, and the topographic realities of building in a city famous for its hills. Instead of handing builders a spreadsheet of 60,000 lots, the city can deliver a defensible shortlist where the infrastructure is already in the ground, the demand is already there, and the sites are actually buildable at a production-home cost basis.

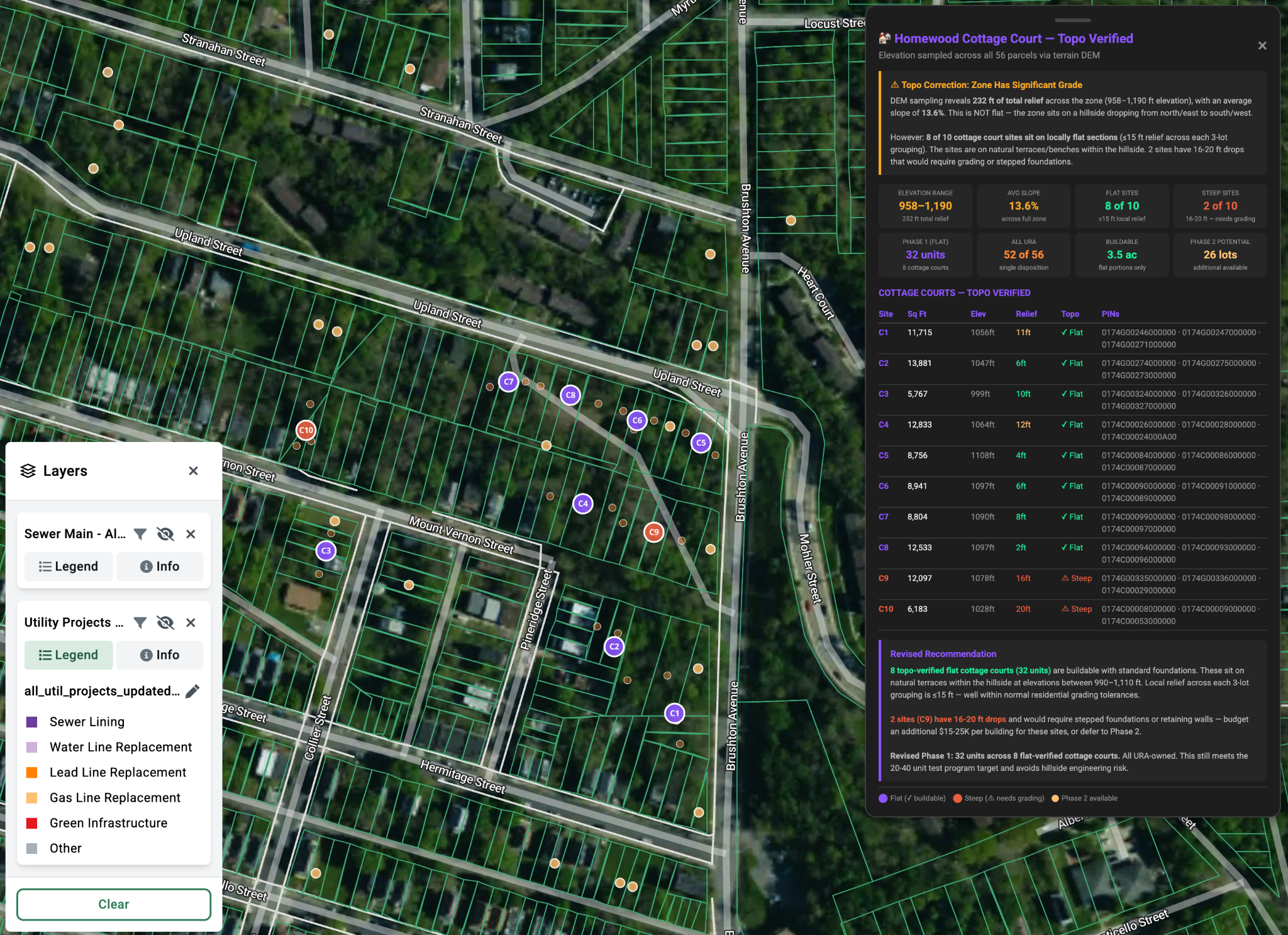
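
One simple way to picture a model that "weighs" several factors and then picks a cluster is a weighted score averaged by group. The sketch below is an assumption-laden illustration rather than a description of how Acres scores parcels: the factor names, the weights, the cluster labels, and the sample values are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical weights; in practice these would reflect the developer's priorities.
WEIGHTS = {"utility_proximity": 0.40, "neighborhood": 0.35, "buildable_slope": 0.25}


def parcel_score(factors):
    """Weighted sum of factor scores, each already normalized to the 0-1 range."""
    return sum(WEIGHTS[name] * factors[name] for name in WEIGHTS)


def best_cluster(parcels):
    """Group parcels by cluster and return the cluster with the highest mean score."""
    scores_by_cluster = defaultdict(list)
    for parcel in parcels:
        scores_by_cluster[parcel["cluster"]].append(parcel_score(parcel["factors"]))
    averages = {name: mean(scores) for name, scores in scores_by_cluster.items()}
    winner = max(averages, key=averages.get)
    return winner, averages


# Made-up sample data for illustration only.
PARCELS = [
    {"cluster": "north", "factors": {"utility_proximity": 0.9, "neighborhood": 0.8, "buildable_slope": 0.3}},
    {"cluster": "north", "factors": {"utility_proximity": 0.8, "neighborhood": 0.7, "buildable_slope": 0.4}},
    {"cluster": "east", "factors": {"utility_proximity": 0.6, "neighborhood": 0.9, "buildable_slope": 0.9}},
    {"cluster": "east", "factors": {"utility_proximity": 0.7, "neighborhood": 0.8, "buildable_slope": 0.8}},
    {"cluster": "south", "factors": {"utility_proximity": 0.2, "neighborhood": 0.5, "buildable_slope": 0.9}},
]

winner, averages = best_cluster(PARCELS)
print(winner, {name: round(score, 3) for name, score in averages.items()})
```

In this toy data the "north" cluster has the best utility access but loses to "east" because its slopes are hard to build on, which is the kind of trade-off a hilly city forces.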

These natural language tools also simplify submarket analysis. For instance, Acres’ lot value trends report looks across proprietary homebuilder index data to identify raw and finished lot transactions, producing interactive data tables and maps. “It takes only a few minutes of computation to generate an up-to-date analysis of lot trends, including land and home sell-through analyses,” says Malloy.

Acres is also digging deeper into the underwriting process. Once sites are surfaced, agents can do much of the other work involved in vetting them. “After clicking a parcel, you can easily run a quick feasibility analysis to determine buildable area, timelines, margin sensitivity, and ‘gotchas’ that may impact the project like zoning and infrastructure considerations,” explains Malloy. “This one can accelerate your site selection consideration window from days to a few minutes.”

The key difference from existing tools isn't just that AI is involved. It's where the workflow starts: with a natural language query that captures the full complexity of what a developer is actually looking for, including qualitative criteria that don't fit neatly into a dropdown menu.

Search Revised

We've written before about the evolving analyst role in commercial real estate: less desk work, more field work that agents can't do. Less time pulling comps and building spreadsheets, more time vetting the output of AI and doing the on-the-ground diligence that no model can replicate. Natural language search accelerates the ongoing compression of behind-the-desk site sourcing work.

But commercial real estate isn't the only place where "dials and knobs" search dominates. The consumer-facing listing services should be paying attention too.

Zillow currently presents no fewer than 21 separate filters, many with five or more options each. Apartments.com is doing somewhat better; they've put a basic AI-powered search interface up front. But it's not actually using AI to power the search itself. It's using AI to set the filters. The next screen just shows the AI's best guess at what filters the user intended to set, and the user is right back in the traditional toggle-and-scroll paradigm.

Ironically, building a natural language search for apartment listings should be easier than building one for large-scale institutional land purchases. The data is more standardized, the variables are fewer, and the user intent is more predictable. But the prize for figuring this out for institutional buyers and homebuilders is much larger, which is why the land search category is where the most interesting work is happening.

The growing role of AI tools puts a premium on data quality and completeness. When a human is manually evaluating five sites, a bad data point is an annoyance. When an AI agent is screening five hundred sites, bad data cascades through the entire analysis. "If you show me bad data and great AI, I'll tell you which one wins," Malloy observed. The larger the dataset being analyzed, the worse the impact of even low error rates. If an automated screen covers 10,000 sites and 2% of them carry a bad data point, that’s 200 flawed analyses, many of which might float up to the top of an automated screening filter.
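
The arithmetic is easy to check, and it extends naturally to the shortlist a developer actually reads. The sketch below is a back-of-the-envelope illustration with hypothetical function names. It assumes errors strike sites independently of one another and of their rank, which is generous: a bad data point that makes a site look better than it is will tend to push that site up the list.

```python
def expected_flawed(n_sites, error_rate):
    """Expected number of site analyses that rest on a bad data point."""
    return n_sites * error_rate


def prob_shortlist_contaminated(shortlist_size, error_rate):
    """Chance that at least one shortlisted site is flawed.

    Assumes errors are independent of each other and of rank,
    which is an optimistic simplification.
    """
    return 1 - (1 - error_rate) ** shortlist_size


print(round(expected_flawed(10_000, 0.02)))               # 200 flawed analyses
print(round(prob_shortlist_contaminated(25, 0.02), 3))    # roughly 0.4
```

Under those assumptions, a 25-site shortlist drawn from data with a 2% error rate has about a 40 percent chance of containing at least one flawed site, even before accounting for errors that flatter a parcel.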

Feedback loops matter too. The queries themselves are a signal of what data people need. Acres saw through user queries that sewer and utility data is particularly useful to homebuilders and greenfield developers—and that it isn't broadly available through public sources—so they went and built that as a proprietary dataset.

The search layer becomes a discovery mechanism for the data layer, creating a flywheel that compounds over time: better search tools reveal data gaps, proprietary data fills those gaps, and the enriched dataset makes the search tools more useful.

The Ends of the Spectrum

The growing simplicity of these tools means that value in the development process is shifting toward the ends of the spectrum. On one end, there's the super-strategic: creating novel investment theses that can be quickly validated through AI-powered search and underwriting. "What if we targeted city-owned vacant parcels near transit in mid-size Rust Belt cities?" is a thesis that used to take a team of analysts weeks to test. Now it takes a well-constructed query and a few minutes of review.

On the other end, there's the super-tactical: actually operating the asset, managing the construction, navigating the entitlement process, building the relationships with municipal stakeholders. These are the parts of development that no AI agent is going to replace, because they require physical presence, human judgment, and local knowledge that doesn't live in any dataset.

The middle of the spectrum—the desk-based research, the comp analysis, the initial screening of sites—is exactly where AI agents are most effective. As these tools mature, the developers and investors who win will be the ones who can generate better theses at the top and execute better on the ground at the bottom, while letting AI handle the analytical work in between. The competitive advantage won't come from having access to the data, or even from having the best AI tools. It will come from asking better questions and doing better work on the ground once the AI has pointed you in the right direction.

-Brad Hargreaves