Computer Vision is Coming, and It’s Gonna Be Weird

Move over, LLMs. Computer vision is going to hit real estate even harder.

Earlier this year, Ukraine gained the upper hand in its war against Russian invaders, a conflict increasingly fought with one-way kamikaze drones.

Throughout 2025, electronic warfare methods like signal jamming had made traditional drone combat challenging. The Russians responded to this by shifting to wired drones connected by thin fiber optic lines to a human pilot. While these drones eluded jamming methods, they had obvious limitations due to being physically linked to their operator’s base. They also left villages in eerie cobwebs of used fiber.

Ukraine took a different approach. They invested in drones that could operate wholly autonomously, using computer vision and AI to identify and destroy targets in the field.

No signal? No problem. A human would pilot the drone into the general vicinity of targets, and AI would take over in the “last mile” where electronic countermeasures are strongest. Thus far this year, Ukraine has liberated more than 400 square kilometers of land at a time when the beleaguered nation was supposed to be on its back foot. Much of this progress came from its use of swarms of jam-proof, computer vision-powered drones clearing the way for more conventional forces.

The implications for warfare are not difficult to imagine. A small army of drones armed with computer vision ordered to seek and destroy any human within a pre-programmed geographic radius is something no country is prepared to face. It is a terrifying thought.

As a real estate publication, we won’t pull on that thread any further. But computer vision is perhaps the fastest-moving segment of the broad world of AI, and real estate, rooted as it is in physical space, will be transformed by it in ways we are only beginning to understand.

Today’s letter surveys the frontier use cases across development, construction, engineering, and operations, and looks at where we’re headed from here.

Computer vision is not new, but its capabilities have taken dramatic steps forward in recent years. And for many in real estate, it has done so with much less noise and fanfare than large language models. Part of this is simply consumer accessibility: anyone can interact with ChatGPT or Claude’s natural language interface, whereas computer vision tools are rare in consumer use. Even AI image generation—which has far fewer enterprise applications than computer vision—is much more familiar to the average person given the popularity of AI photo editing and GPT’s mid-2025 Ghiblification meme.

Computer vision has also drawn less attention than natural language AI tools because it has historically been sold as a feature within bulky vertical AI software packages (inspection apps, leasing platforms, security systems) rather than a standalone product. But as foundation models improve rapidly, computer vision is breaking out of its vertical software wrapper. And it’s making sneaky inroads across real estate operations, acquisitions, and development. Consider a few examples:

Document Processing: Advances in AI have taken document processing to the next level, structuring the mountains of paper documents real estate operators touch. “Computer vision is much more than just OCR,” said Jason Wallis, CEO of SurfaceAI, a company using computer vision to assist operating teams. “Techniques like semantic chunking have enhanced LLMs’ ability to understand tables, checkboxes, and complex layouts that span pages, pushing the technology’s capabilities beyond reading basic text.” Suddenly, lease audits that once took hundreds of human hours can now be done in hours.

Construction monitoring: OpenSpace and Buildots are among the companies taking continuous site imagery—360-degree video from hardhat cameras, drone flyovers, fixed-position feeds—and turning it into structured progress data: percent-complete by trade, deviations from the BIM model, safety flags, and automated as-built documentation. Catching a framing error or a lagging subcontractor in near-real-time pays for the subscription many times over.

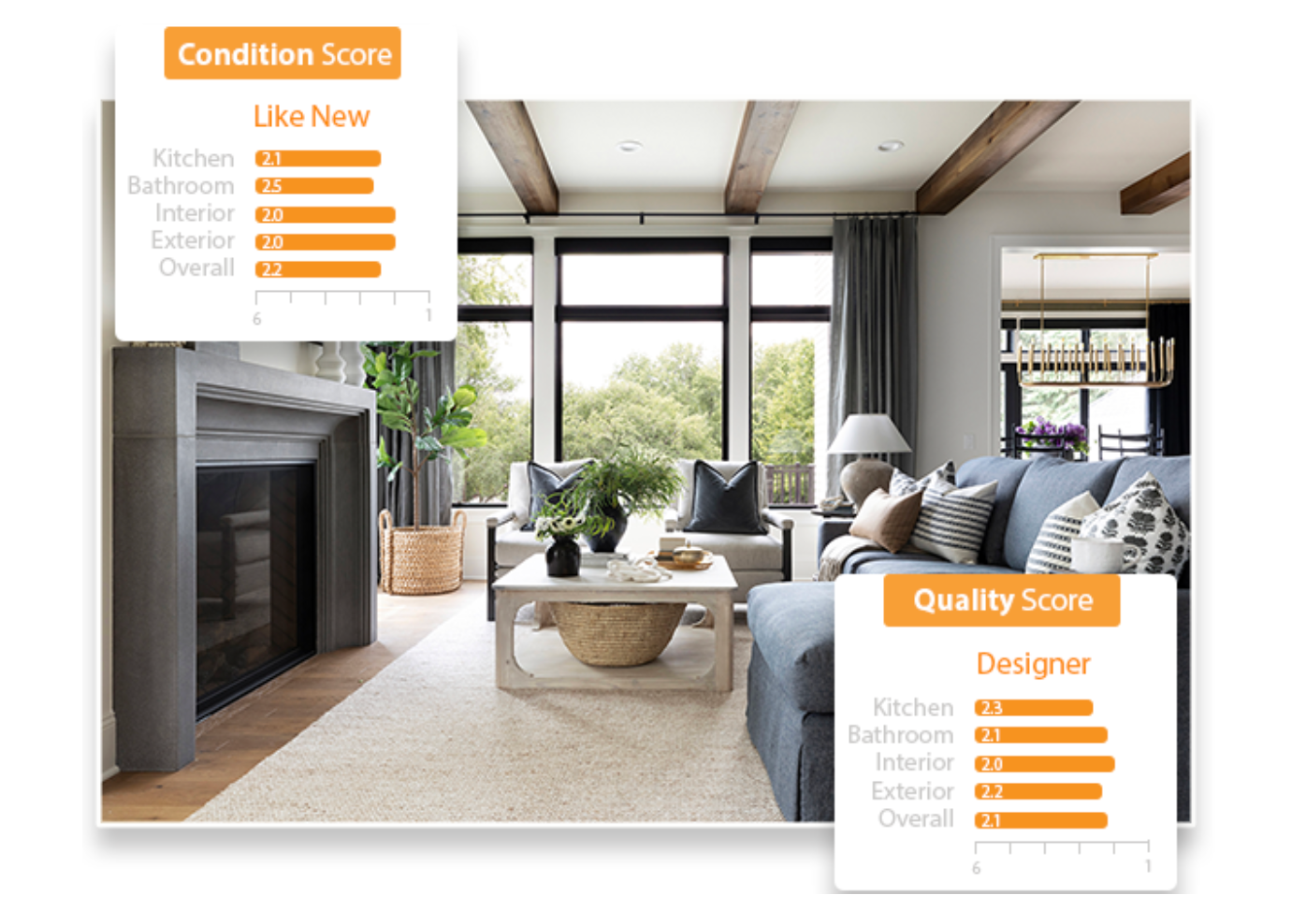

Inspections and quality scoring: Tools like FoxyAI and Restb.ai are taking photo libraries, floorplans, and listings and converting them into machine-readable attributes: condition scores, feature tags, renovation flags, and quality ratings. At their peak, iBuyers like Opendoor were power users of such tools—they needed to get quick-hit intelligence on a property’s quality and condition to make a rapid offer. In multifamily, this technology is making unit turn inspections simpler and more objective. “Unit inspection photos as well as work order request photos and videos can improve the accuracy of data on work orders and make it easier to sort through inspection photos,” said Wallis.

Security and Life Safety: The cameras already installed in lobbies, garages, and common areas are being upgraded from passive recording to active analysis. They’re recognizing residents for touchless entry, detecting tailgating at doors, reading license plates at the gate, and flagging accidents, loitering, or weapons in near-real-time. Memory care operators have become the clearest power users: in-room cameras catch resident falls the moment they happen, and the liability and clinical savings are significant enough that the technology has spread across most of the major senior living portfolios in just a few years.

The common thread through all of these is computer vision technology extracting structured data from unstructured visual inputs. These inputs might be video (a security camera feed) or static (inspection photos of a house), but AI models are now remarkably good at recognizing patterns from any visual data set when given sufficient training data. Five years ago, AI could recognize a house or a dog in a picture. Now, it can evaluate the finish quality of the house, flag likely soffit damage, identify specific furniture SKUs, and note that the dog is a 10-year-old Labrador Retriever in a playful mood.

Real estate has spent the past 15 years installing cameras and playing around with drones. But we’re just beginning to recognize their full value.

The implications of computer vision are best understood not by going application by application but by starting with the technology’s capabilities and working backwards.

One, every human will be instantly recognizable. This is already the reality (and has been for some time in more sophisticated corners of the security sector), but facial recognition and identification as a technology is going to break containment and be broadly available to every multifamily property manager and nosy neighbor in the next five years. Property managers will have a cornucopia of data on their residents’ guests, behaviors, and lifestyles, should they want it. Criminals will be far easier to catch and prosecute, assuming DAs and judges care to do so.

Similarly, behavior will be read as easily as credit scores. Stress, intoxication, gait abnormalities, signs of cognitive decline, and rough health status are all inferable from a few seconds of video. A prospect will be scored on tenancy risk by the time they finish touring the kitchen. Operators will gain a level of behavioral visibility into their residents that has no precedent in American housing, and enforcement of fair housing law against visual profiling will lag by years.

Two, every asset will have optical memory. The cameras are already in the lobby, the hallway, the garage, and the gym. In five years those feeds will be continuously indexed by event, object, and person, making every building a fully searchable archive of who did what where. "Show me everyone who entered the gym between 6 and 8 this morning" becomes a query. To take it a step further, when structured data can be generated automatically from any visual feed, analysis can incorporate data that was once inaccessible and answer questions like: "Which buildings in my portfolio have the longest morning wait times at the main elevator, and how has that changed over time?"
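To make the "becomes a query" idea concrete, here is a minimal sketch in Python. It assumes a vision model has already turned camera feeds into structured event records. The `Event` schema, the camera names, and the resident IDs are all hypothetical, invented purely for illustration; no real product's data model is implied.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class Event:
    """One structured record a vision model might emit (hypothetical schema)."""
    timestamp: datetime
    camera: str      # e.g. "gym-door"
    person_id: str   # anonymized identifier assigned upstream
    action: str      # e.g. "entered" or "exited"


def who_entered(events, camera, start, end):
    """Distinct person_ids that entered via `camera` between start and end (inclusive)."""
    return sorted({
        e.person_id
        for e in events
        if e.camera == camera
        and e.action == "entered"
        and start <= e.timestamp.time() <= end
    })


# Toy data standing in for an indexed video archive.
events = [
    Event(datetime(2026, 1, 5, 6, 15), "gym-door", "resident-014", "entered"),
    Event(datetime(2026, 1, 5, 7, 40), "gym-door", "resident-102", "entered"),
    Event(datetime(2026, 1, 5, 7, 55), "gym-door", "resident-014", "exited"),
    Event(datetime(2026, 1, 5, 9, 5), "gym-door", "resident-230", "entered"),
    Event(datetime(2026, 1, 5, 6, 30), "garage-gate", "resident-077", "entered"),
]

# "Show me everyone who entered the gym between 6 and 8 this morning."
morning_gym = who_entered(events, "gym-door", time(6, 0), time(8, 0))
print(morning_gym)
```

The hard part is the upstream model that produces reliable events. Once the footage is structured, the question itself is an ordinary filter.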

Three, visual lookalike modeling will add a new dimension to site selection. Point a model at Google Street View – which has visual data going back to 2008 – layer in local data on rent growth, crime, retail demand, and demographic drift, and you can train it to recognize what an appreciating, successful street actually looks like. A retail entrepreneur with five strong locations and three mediocre ones will soon be able to run two analyses that don't exist today: an address-level lookalike that returns 50 candidate sites matching the winners and excluding the losers across every measurable variable, and a street-view lookalike that returns sites visually resembling the built environment around the winners.
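One plausible way to sketch the lookalike idea: represent each site as a numeric vector (in practice, an embedding produced by a vision model plus local data), then rank candidates by how closely they resemble the winners and how little they resemble the losers. The three-number vectors and site names below are hand-made toy values, and the scoring rule (similarity to the winners' centroid minus similarity to the losers' centroid) is an illustrative assumption, not a description of any vendor's method.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def centroid(vectors):
    """Element-wise mean of a list of vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def rank_lookalikes(winners, losers, candidates):
    """Rank candidates by (similarity to winners) minus (similarity to losers)."""
    win_c, lose_c = centroid(winners), centroid(losers)
    scores = {
        name: cosine(vec, win_c) - cosine(vec, lose_c)
        for name, vec in candidates.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Toy features: [foot traffic, storefront density, vacancy], scaled 0 to 1.
winners = [[0.9, 0.8, 0.1], [0.8, 0.9, 0.2]]
losers = [[0.2, 0.3, 0.9], [0.3, 0.2, 0.8]]
candidates = {
    "site-A": [0.85, 0.80, 0.15],
    "site-B": [0.25, 0.30, 0.85],
    "site-C": [0.60, 0.50, 0.50],
}

ranked = rank_lookalikes(winners, losers, candidates)
for name, score in ranked:
    print(f"{name}: {score:+.2f}")
```

With real embeddings the vectors would have hundreds of dimensions, but the ranking logic would look much the same.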

Finally, the real estate labor market for "trained humans looking at things" will compress. Inspectors, appraisers, leasing agents, security guards, underwriting analysts, construction QA, insurance adjusters: every role whose core function is a trained human producing judgment from visual input will take a hit. Real estate is among the most visually-mediated industries in the economy, which means the employment compression driven by computer vision will land harder here than almost anywhere else.

While the first-order applications of computer vision are complicated enough, the second-order implications are hard to fathom.

One, the tradeoff between privacy and convenience will intensify dramatically. For consumers, there’s a tangible benefit to being recognized wherever you go: preferences are remembered, doors are unlocked, and machines do things without being asked because they know what you want before you even know you want it.

This will only be amplified by the West’s decline into a low trust society. Restrooms are locked because addicts shoot up in them, bikeshare programs require multiple layers of authentication lest someone throw the bikes into a river, and fraud-plagued multifamily operators stack more verification hoops in front of prospective tenants because the cost of letting in a bad actor is so high.

In a low-trust environment, being instantly recognized by computer vision as a trustworthy individual who will not trash the hotel room will yield growing dividends. Opt out – assuming you can – and you will be subjected to a growing litany of cumbersome, embarrassing, TSA-style tests to verify you are worth trusting. Laws that limit collateral or other financial tests, such as security deposit maximums, will only exacerbate the problem.

A complicating factor is declining trust in visual provenance just as visual data becomes more important. When any photo can be generated and the generated ones are indistinguishable from real ones, the industry's entire evidentiary stack — listing photos, inspection reports, insurance claim photos, construction as-builts, court exhibits — becomes unreliable. Expect two or three years of growing fraud, followed by a hard migration to cryptographically-signed-at-capture imagery. Every photo taken before that shift becomes forgeable-by-default, which has implications for historical claims, old litigation, and any property record built on pre-2027 photography.
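For readers wondering what "signed at capture" means mechanically, here is a rough sketch. It uses a keyed hash (HMAC) from Python's standard library as a stand-in so the example stays self-contained. Real provenance systems would more likely use asymmetric signatures with keys held in camera hardware, which is the approach content-provenance standards such as C2PA take. The device key, function names, and record format here are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret held by the capture device; an assumption for illustration only.
DEVICE_KEY = b"hypothetical-device-secret"


def sign_at_capture(image_bytes, captured_at, key=DEVICE_KEY):
    """Bind the image content and its capture time to a tag only the key holder can produce."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    message = f"{digest}|{captured_at}".encode()
    tag = hmac.new(key, message, hashlib.sha256).hexdigest()
    return {"sha256": digest, "captured_at": captured_at, "tag": tag}


def verify(image_bytes, record, key=DEVICE_KEY):
    """Recompute the tag from the image in hand; any change to pixels or timestamp fails."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    message = f"{digest}|{record['captured_at']}".encode()
    expected = hmac.new(key, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["tag"])


photo = b"raw bytes of an inspection photo"
record = sign_at_capture(photo, "2027-03-01T09:30:00Z")

print(verify(photo, record))                # untouched photo
print(verify(photo + b" edited", record))   # altered after capture
```

The point of the sketch is that a photo without such a record cannot be proven authentic after the fact.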

Finally, the biggest winners won't be the companies building computer vision tools. They'll be the firms that already own the years of footage those tools need to be useful. Google is an obvious winner, as is Tesla – no one else has better exterior, neighborhood-level inputs. Internet listing service (ILS) platforms with massive libraries of asset photos, as well as construction technology platforms training proprietary models on customers’ visual data, will also do well.

The question is whether visual data feeds are powerful enough to erode real estate’s “local knowledge” advantage. While none of this replaces relationships, the nuances about specific neighborhoods, blocks, and sites become easier to access via visual queries. How much “local knowledge” could be replicated by a few months’ worth of 24/7 site footage analyzed by a model trained on comparable footage of thousands of other sites?

While I’ve previously expressed confidence that the “field” aspects of the asset manager’s job will be safe from AI, I now believe that to be true only on a 3-5 year horizon. Beyond that, all bets are off.

–Brad Hargreaves

Covering the future of real estate and the people creating it